
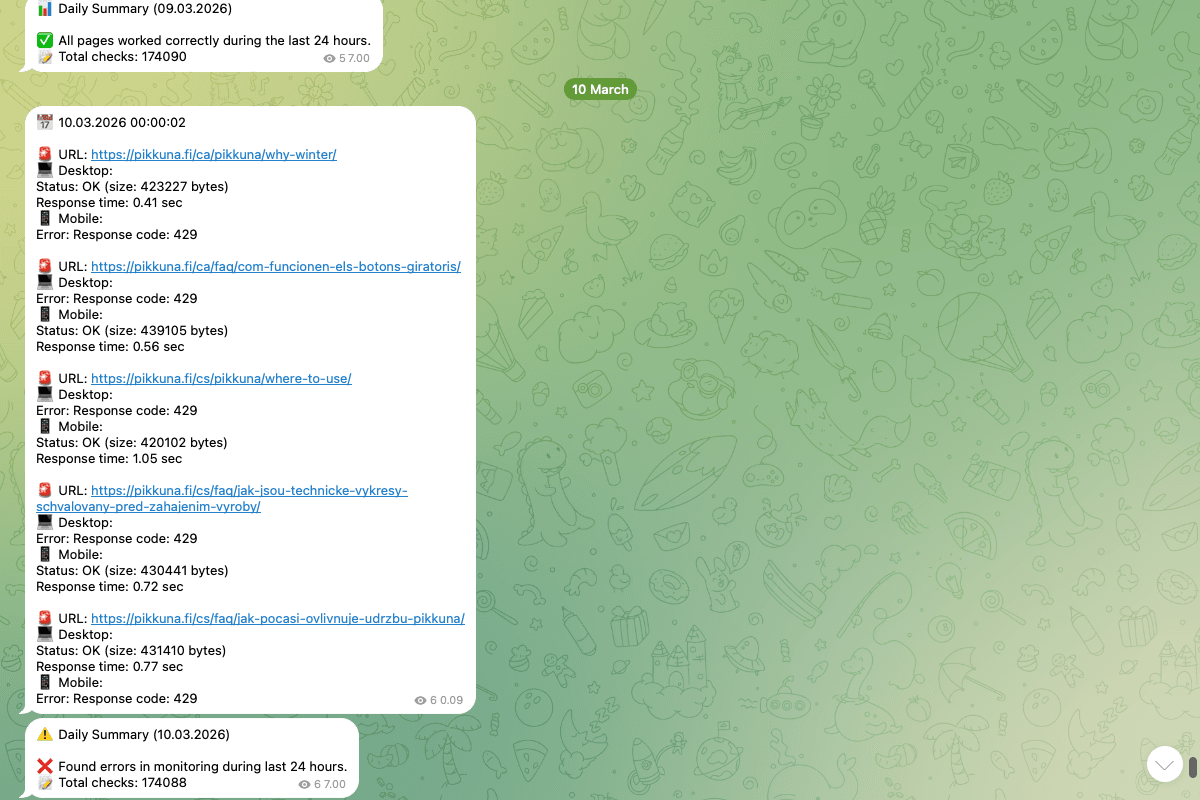

Site Pulse — Open-Source Website & Domain Health Monitor

Open-source Python tool that discovers URLs from sitemap.xml, validates each in desktop and mobile modes with parallel checks, monitors domain registrations via WHOIS, and delivers instant Telegram alerts.

Key Results

- Multi-TLD support — WHOIS monitoring for unlimited domain extensions

- 10 workers — parallel health checks via ThreadPoolExecutor

- Desktop + Mobile — dual device validation per URL

- 90-day warning — proactive domain expiration alerts

The Challenge

Any website with a non-trivial number of pages faces the same monitoring gap: you can ping a homepage, but you can't manually check every URL that sitemap.xml knows about — especially across both desktop and mobile. Add domain registrations across multiple TLDs to the mix and the surface area grows fast. Missed renewals, silent nameserver changes, and pages that return 200 with an empty body are all failure modes that standard uptime monitors miss entirely.

The Solution

I built an automated monitoring system with five core components:

- Dynamic URL discovery — parses sitemap.xml with automatic XML namespace detection, so monitoring always reflects the current site structure

- Dual-mode health checks — validates every URL in both desktop and mobile modes using realistic User-Agent headers, with retry logic and response size thresholds

- Domain watchdog — monitors WHOIS records for expiration dates and nameserver changes across all TLDs, alerting 90 days before expiry

- Telegram alerts — instant notifications with automatic message chunking and granular controls (success/warning/error toggles)

- Log analyzer — post-check analysis that groups pages by base path and surfaces the slowest, most unstable, and largest responses
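The namespace detection mentioned in the first component can be sketched as follows. This is a minimal illustration using only the standard library, not the project's actual parser; the function name `extract_sitemap_urls` and the sample sitemap are assumptions made for the example.

```python
import xml.etree.ElementTree as ET


def extract_sitemap_urls(xml_text: str) -> list[str]:
    """Return every <loc> value from a sitemap, whatever namespace it declares."""
    root = ET.fromstring(xml_text)
    # ElementTree renders a namespaced tag as "{namespace-uri}tag", so the
    # namespace (if any) can be read straight off the root element.
    if root.tag.startswith("{"):
        namespace = root.tag[: root.tag.index("}") + 1]
    else:
        namespace = ""
    return [
        loc.text.strip()
        for loc in root.iter(f"{namespace}loc")
        if loc.text and loc.text.strip()
    ]


# Hypothetical sample input for illustration.
SAMPLE_SITEMAP = """<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">
  <url><loc>https://example.com/</loc></url>
  <url><loc>https://example.com/about</loc></url>
</urlset>"""

urls = extract_sitemap_urls(SAMPLE_SITEMAP)
```

Reading the namespace from the root tag avoids hard-coding the sitemaps.org URI, so the same function also handles sitemaps that omit the namespace.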

Concurrent Page Health Checks

Parallel URL validation using ThreadPoolExecutor with session reuse and conditional Telegram notifications:

```python
# website_monitor.py
import concurrent.futures
from concurrent.futures import ThreadPoolExecutor
from datetime import datetime

import requests


def check_pages() -> None:
    """Check all websites from the list"""
    timestamp = datetime.now().strftime("%d.%m.%Y %H:%M:%S")
    messages_to_send = []

    # One shared session lets the workers reuse pooled connections
    with requests.Session() as session:

        def check_with_session(url):
            return check_single_page(url, session)

        with ThreadPoolExecutor(max_workers=MAX_WORKERS) as executor:
            future_to_url = {
                executor.submit(check_with_session, url): url
                for url in URLS_TO_CHECK
            }
            for future in concurrent.futures.as_completed(future_to_url):
                message, is_success, is_warning, is_error = future.result()
                # Keep only the result types enabled in the configuration
                if (
                    (is_success and NOTIFY_SUCCESS)
                    or (is_warning and NOTIFY_WARNING)
                    or (is_error and NOTIFY_ERROR)
                ):
                    messages_to_send.append(message)

    # Aggregate everything into a single timestamped report
    if messages_to_send:
        message = f"📅 {timestamp}\n\n" + "\n\n".join(messages_to_send)
        send_telegram_message(message)
```

Result: 10 parallel workers with session reuse, configurable notification granularity, and automatic message aggregation.
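Because the aggregated report can grow with the number of URLs, it may exceed the 4096-character limit that the Telegram Bot API places on a single text message. The project's own chunking code is not shown here, so the following is only a sketch of one way to do it; `chunk_message` and `TELEGRAM_MAX_LENGTH` are hypothetical names.

```python
TELEGRAM_MAX_LENGTH = 4096  # Telegram Bot API limit for one text message


def chunk_message(text: str, limit: int = TELEGRAM_MAX_LENGTH) -> list[str]:
    """Split text into pieces of at most `limit` characters, preferring blank lines."""
    chunks = []
    remaining = text
    while len(remaining) > limit:
        # Prefer the last blank line inside the window so per-URL reports stay whole.
        cut = remaining.rfind("\n\n", 0, limit)
        if cut <= 0:
            cut = limit  # no usable break: fall back to a hard cut
        chunks.append(remaining[:cut])
        remaining = remaining[cut:].lstrip("\n")
    if remaining:
        chunks.append(remaining)
    return chunks
```

Each returned chunk could then be passed to the sender in order. Splitting on the blank lines that separate per-URL messages keeps every individual report readable within one Telegram message.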

Desktop & Mobile Validation with Retry

Each URL is checked in both device modes with automatic retry on failure and response size validation:

```python
# website_monitor.py
import time

import requests


def check_single_page(url: str, session: requests.Session) -> tuple:
    def try_request(headers, device_type):
        start_time = time.time()
        response = session.get(
            url,
            timeout=(CONNECT_TIMEOUT, READ_TIMEOUT),
            headers=headers,
        )
        content_length = len(response.content)
        elapsed_time = time.time() - start_time

        # A 200 with a near-empty body still counts as a failure
        if response.status_code != 200:
            raise Exception(f"Response code: {response.status_code}")
        if content_length < MIN_RESPONSE_SIZE:
            raise Exception(f"Response size too small: {content_length} bytes")
        return response, content_length, elapsed_time, None

    # Desktop check with retry
    try:
        try:
            response, content_length, elapsed_time, _ = try_request(
                DESKTOP_HEADERS, "Desktop"
            )
        except Exception:
            # Wait 5 seconds, then retry once
            time.sleep(5)
            response, content_length, elapsed_time, _ = try_request(
                DESKTOP_HEADERS, "Desktop"
            )
    # (excerpt ends here; the outer except handler and the rest of the function are not shown)
```

Result: Catches both HTTP errors and suspiciously small responses (e.g. empty pages returning 200), with a 5-second retry delay.
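The excerpt stops after the desktop pass. As a rough sketch of how the same retry-once pattern could cover both device modes, the example below loops over two header sets and takes the request function as a parameter so it can be exercised without network access. The User-Agent strings, the `check_both_modes` name, and the result format are assumptions for illustration, not the project's actual values.

```python
import time
from typing import Callable

# Hypothetical header sets; the project's real DESKTOP_HEADERS / MOBILE_HEADERS are not shown.
DESKTOP_HEADERS = {"User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"}
MOBILE_HEADERS = {"User-Agent": "Mozilla/5.0 (iPhone; CPU iPhone OS 17_0 like Mac OS X)"}


def check_both_modes(
    fetch: Callable[[dict], int],
    retry_delay: float = 5.0,
) -> dict[str, str]:
    """Run `fetch` once per device mode, retrying a failed mode a single time.

    `fetch(headers)` should return the response size in bytes or raise on failure.
    """
    results = {}
    for device, headers in (("Desktop", DESKTOP_HEADERS), ("Mobile", MOBILE_HEADERS)):
        for attempt in (1, 2):
            try:
                size = fetch(headers)
                results[device] = f"ok ({size} bytes, attempt {attempt})"
                break
            except Exception as exc:
                if attempt == 2:
                    results[device] = f"failed: {exc}"
                else:
                    time.sleep(retry_delay)  # wait before the single retry
    return results
```

In real use, `fetch` would wrap a `session.get` call plus the status and size checks; passing it in keeps the retry logic independent of the HTTP client.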

Domain Expiration & Nameserver Monitoring

WHOIS-based domain check that validates expiration dates and verifies nameservers match expected configuration:

```python
# website_monitor.py
from datetime import datetime

import whois


def check_domain(domain: str) -> tuple[str, bool]:
    """Check domain registration expiry and nameservers"""
    w = whois.whois(domain)

    # Some registrars return several expiration dates; use the earliest
    if isinstance(w.expiration_date, list):
        expiry_date = min(w.expiration_date)
    else:
        expiry_date = w.expiration_date
    days_until_expiry = (expiry_date - datetime.now()).days

    # Compare nameservers case-insensitively
    domain_ns = [ns.lower() for ns in w.name_servers]
    our_ns = [ns.lower() for ns in OUR_NAMESERVERS]
    using_our_ns = any(ns in domain_ns for ns in our_ns)

    messages = []
    has_warning = False

    if days_until_expiry <= EXPIRY_WARNING_DAYS:
        messages.append(f"⚠️ Domain will expire in {days_until_expiry} days")
        has_warning = True

    if not using_our_ns:
        messages.append("⚠️ Domain is using external nameservers")
        has_warning = True
    # (excerpt ends here; message assembly and the return statement are not shown)
```

Result: Handles WHOIS quirks (some registrars return lists instead of single dates) and monitors both expiration and DNS configuration drift.
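WHOIS data is inconsistent across registries, so it can help to isolate the normalization step in small pure functions that are easy to test. The sketch below assumes that a missing field may come back as `None` and that list entries may not all be dates; both helper names are hypothetical and not part of the project.

```python
from datetime import datetime
from typing import Optional


def days_until_expiry(expiration_date, now: datetime) -> Optional[int]:
    """Normalize a WHOIS expiration value; return whole days left, or None if unknown."""
    if isinstance(expiration_date, list):
        # Keep only real datetimes and take the earliest one.
        dates = [d for d in expiration_date if isinstance(d, datetime)]
        expiration_date = min(dates) if dates else None
    if not isinstance(expiration_date, datetime):
        return None
    return (expiration_date - now).days


def uses_expected_nameservers(name_servers, expected) -> bool:
    """True if at least one expected nameserver appears in the WHOIS record."""
    # Lowercase and drop a trailing dot so "NS1.EXAMPLE.COM." matches "ns1.example.com".
    found = {ns.lower().rstrip(".") for ns in (name_servers or [])}
    return any(ns.lower().rstrip(".") in found for ns in expected)
```

Passing `now` explicitly keeps the date arithmetic deterministic in tests. A `None` result can be reported as "expiry unknown" rather than raising a `TypeError` during subtraction.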

Results

| Metric | Value |
|---|---|
| Codebase | ~757 lines of Python |
| Parallel workers | 10 (ThreadPoolExecutor) |
| Device modes | Desktop + Mobile per URL |
| Domain monitoring | Any number of TLDs via WHOIS |
| Expiry warning | 90 days before expiration |
| Scheduling | Cron-based, fully automated |

The system runs unattended via cron, dynamically adapting to sitemap changes and delivering Telegram alerts within seconds of detecting an issue — whether it's a down page, a slow response, or an expiring domain.

Available

AvailableNeed something similar?

I build custom solutions — from APIs to full products. Let's talk about your project.

Related projects

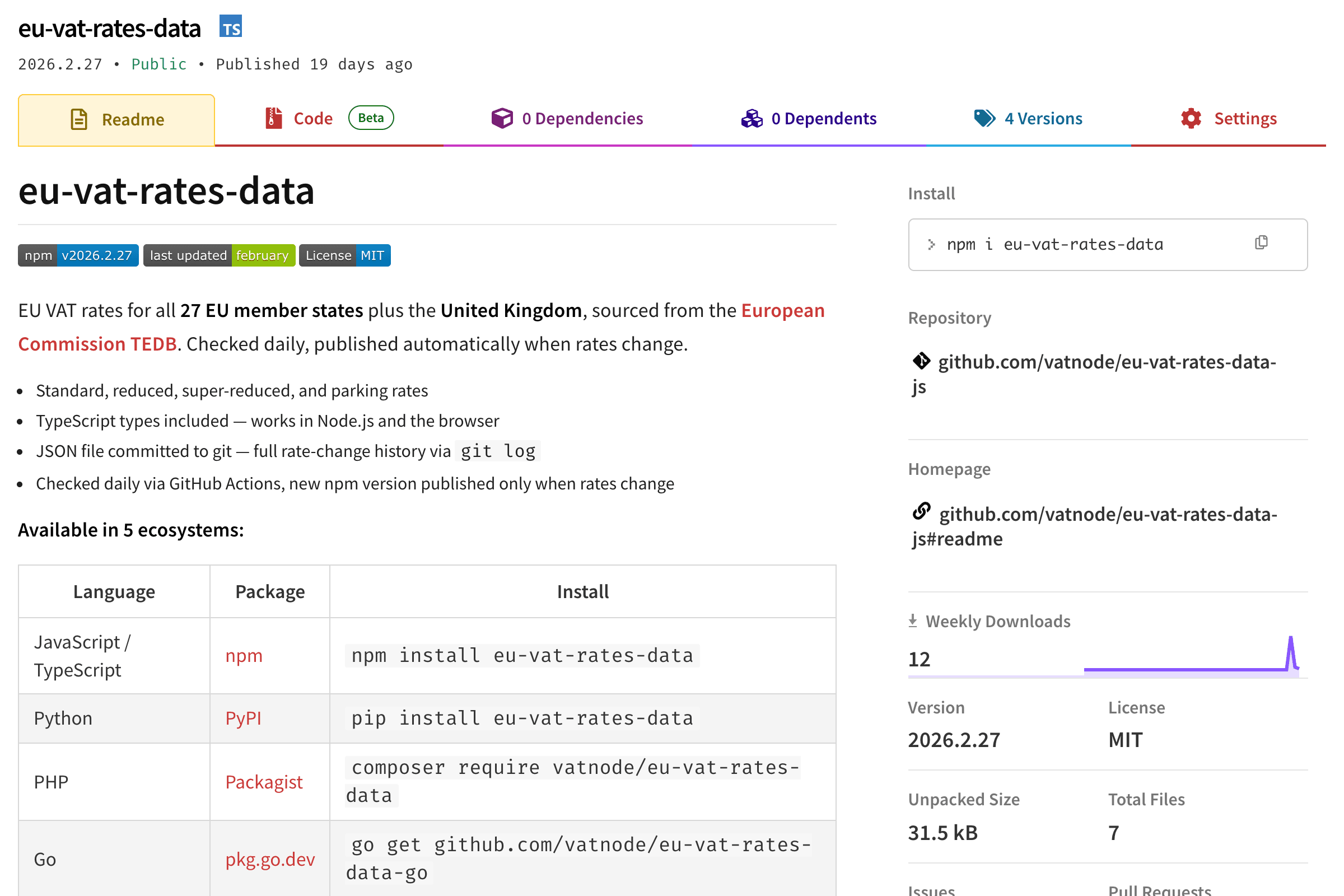

Free, open-source EU VAT rates for all 27 member states + UK. Published as native packages for npm, PyPI, Packagist, Go, and RubyGems.